The Fundamentals Behind Modern Scientific Cameras

Scientific cameras are essential for capturing images in scientific research and for understanding the phenomena around us. A key aspect of scientific cameras is that they are quantitative: each camera measures the number of photons (light particles) that interact with the detector.

Each scientific camera has a sensor that detects and counts photons in the ultraviolet, visible and infrared (UV-VIS-IR) region. These photons are usually emitted from the sample.

Sensor Technology

CCD Sensors: The Basics

CCD sensors are silicon-based semiconductors that capture photons and convert them into photoelectrons. The resulting charge is converted into a digital signal and read out by the camera. Multiple enhancements are available for CCD sensors that make them sensitive to different wavelength ranges.

EMCCD Sensors: The Basics

Electron-multiplying CCD (EMCCD) sensors are a variant of silicon-based CCDs that can capture a low number of photons and convert them into a strong signal. These sensors have on-chip amplification, which increases the photoelectron count and therefore the signal.
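As a rough illustration of why on-chip amplification helps at low light, the sketch below multiplies the photoelectron count by an EM gain before readout. It assumes the commonly used simplification that read noise, referred back to the input, is divided by the EM gain; the function name `em_amplify` and the example values are hypothetical and not taken from any camera's software.

```python
def em_amplify(photoelectrons, em_gain, read_noise_e):
    """Apply an idealized EM gain to a photoelectron count.

    Returns (amplified signal in electrons, effective read noise in
    electrons referred to the input). Assumes the gain is noiseless,
    which a real EM register is not.
    """
    if em_gain < 1:
        raise ValueError("EM gain must be at least 1")
    amplified = photoelectrons * em_gain
    effective_read_noise = read_noise_e / em_gain
    return amplified, effective_read_noise


# Hypothetical example: 5 photoelectrons, EM gain of 100, 50 e- read noise.
signal_e, noise_e = em_amplify(5, 100, 50)
print(signal_e, noise_e)
```

A real EM register also adds its own multiplication noise, which this sketch deliberately leaves out.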

ICCD and emICCD Sensors: The Basics

Intensified CCD (ICCD) sensors are another variant of the silicon-based CCD, with the addition of an intensifier. The intensifier allows for nanosecond gating, which is optimal for applications that need ultra-short exposure times.

InGaAs Sensors: The Basics

InGaAs focal plane array sensors are semiconductors optimized for shortwave near-infrared wavelengths (900-1700 nm). They consist of an InP substrate, an InGaAs absorption layer, and an ultrathin InP cap.

sCMOS Sensors: The Basics

Scientific CMOS (sCMOS) sensors are scientific-grade complementary metal-oxide-semiconductor devices that provide low noise alongside high speed, high quantum efficiency and high resolution. sCMOS sensors parallelize their readout to image at much higher speeds.

Camera Basics

Quantum Efficiency

Quantum efficiency (QE) is the percentage of incident photons that an imaging device converts into electrons. It depends on the sensor material and on the wavelength of the light being detected.
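To make the definition concrete, here is a minimal sketch that converts a number of incident photons into an expected number of photoelectrons for a given quantum efficiency. The function name and the example figures are illustrative assumptions, and a single QE value stands in for what is really a wavelength-dependent curve.

```python
def expected_photoelectrons(incident_photons, qe_percent):
    """Expected photoelectrons for a given photon count and QE (in percent)."""
    if not 0.0 <= qe_percent <= 100.0:
        raise ValueError("QE must be between 0 and 100 percent")
    return incident_photons * qe_percent / 100.0


# Hypothetical example: 1000 photons reaching a sensor with 95% QE.
print(expected_photoelectrons(1000, 95.0))
```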

Scientific Camera Noise Sources

Noise within an image is caused by random variation of charge within the camera, resulting in changes to brightness or color. Noise has multiple sources: it can be produced by the sensor, the camera electronics, temperature, or the natural fluctuation of the light itself.
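A common way to combine independent noise sources is to add them in quadrature (root sum of squares). The sketch below assumes that model, takes the natural fluctuation of light (shot noise) as the square root of the signal, and takes thermally generated dark noise as the square root of dark current times exposure time. All function names and values are hypothetical.

```python
import math


def shot_noise(signal_e):
    """Shot noise in electrons: square root of the signal (Poisson statistics)."""
    return math.sqrt(signal_e)


def dark_noise(dark_current_e_per_s, exposure_s):
    """Noise from thermally generated charge accumulated during the exposure."""
    return math.sqrt(dark_current_e_per_s * exposure_s)


def combine_noise(*sources_e):
    """Root-sum-square of independent noise sources, all in electrons."""
    return math.sqrt(sum(s * s for s in sources_e))


# Hypothetical example: 100 e- signal, 0.5 e-/s dark current over 8 s, 2 e- read noise.
total = combine_noise(shot_noise(100), dark_noise(0.5, 8), 2.0)
print(total)
```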

Signal to Noise Ratio

The signal-to-noise ratio (SNR) describes the relationship between the signal and the noise generated within a pixel. It is calculated by dividing the total number of detected photons by the total noise.
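The division described above can be sketched as follows. This assumes the signal is expressed in detected photoelectrons and that total noise is the quadrature sum of shot noise, dark charge noise and read noise; the function name and parameters are illustrative, not part of any camera API.

```python
import math


def snr(signal_e, read_noise_e=0.0, dark_e=0.0):
    """Signal-to-noise ratio for one pixel.

    signal_e: detected signal in photoelectrons
    read_noise_e: read noise in electrons rms
    dark_e: dark charge accumulated during the exposure, in electrons
    """
    if signal_e <= 0:
        return 0.0
    # Shot noise squared equals the signal; dark noise squared equals dark_e.
    total_noise = math.sqrt(signal_e + dark_e + read_noise_e ** 2)
    return signal_e / total_noise


# With no read or dark noise, SNR reduces to the square root of the signal.
print(snr(100.0))
print(snr(100.0, read_noise_e=10.0))
```

Under these assumptions a pixel limited only by shot noise has an SNR equal to the square root of its signal, so quadrupling the detected signal doubles the SNR.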

Full Well Capacity and Pixel Saturation

Full well capacity is the amount of charge that can be stored within a pixel before saturation. At this point the well is full and no more charge can be stored.
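Saturation can be pictured as a simple clipping operation. The sketch below is a hypothetical model in which charge accumulates in a pixel until it reaches the full well capacity, after which any further charge is discarded; the function name and the numbers are assumptions for illustration only.

```python
def collect_charge(stored_e, incoming_e, full_well_e):
    """Add incoming photoelectrons to a pixel well, clipping at full well.

    Returns (stored charge in electrons, True if the pixel is saturated).
    """
    total = stored_e + incoming_e
    if total >= full_well_e:
        return full_well_e, True
    return total, False


# Hypothetical pixel with a 30,000 e- full well receiving two bursts of 20,000 e-.
charge, saturated = collect_charge(0, 20000, 30000)
charge, saturated = collect_charge(charge, 20000, 30000)
print(charge, saturated)
```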

Pixel Size and Camera Resolution

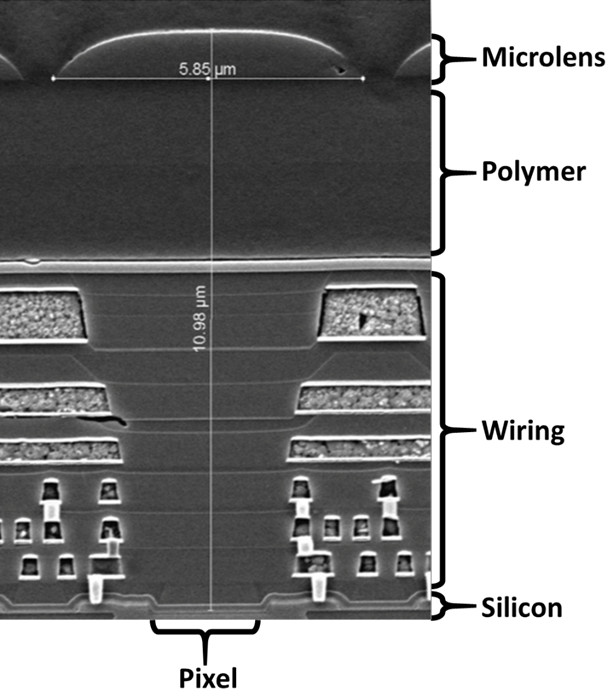

Pixel size influences both the sensitivity and the resolution of the sensor: smaller pixels provide higher resolution, while larger pixels offer higher sensitivity. However, pixel size is not the only factor. Lens aperture, magnification, the Rayleigh criterion and the Nyquist limit also contribute to the overall resolution of the camera and lens.
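One way to see how the Rayleigh criterion and the Nyquist limit interact with pixel size is the sketch below. It assumes a microscope-style setup in which the Rayleigh limit is 0.61 × wavelength / numerical aperture, and in which Nyquist sampling needs at least two pixels across that distance once it has been magnified onto the sensor. The function names and example values are illustrative assumptions.

```python
def rayleigh_limit_um(wavelength_um, numerical_aperture):
    """Smallest resolvable separation at the sample (Rayleigh criterion)."""
    return 0.61 * wavelength_um / numerical_aperture


def max_pixel_size_um(wavelength_um, numerical_aperture, magnification):
    """Largest pixel that still samples the Rayleigh limit twice (Nyquist)."""
    projected = rayleigh_limit_um(wavelength_um, numerical_aperture) * magnification
    return projected / 2.0


# Hypothetical example: 0.5 um light, NA 1.4 objective, 100x magnification.
print(max_pixel_size_um(0.5, 1.4, 100))
```

Pixels larger than this value would undersample the optical resolution, while much smaller pixels gain no extra detail and collect less light each.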

Field of View and Angular Field of View

Field of view is the maximum area of a sample that a camera can image. It is determined by the focal length of the camera lens and the size of the sensor. The angular field of view is determined by the focal length and is defined as the angle between light travelling along the horizontal and light captured from the edge of the object.
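The relationship between sensor size, focal length and field of view can be sketched with simple lens geometry. The code below assumes a thin lens and an object much farther away than the focal length; the function names are hypothetical. It returns the full angle across the sensor dimension, so the angle measured from the horizontal to the edge of the object is half of that value.

```python
import math


def angular_fov_deg(sensor_dim_mm, focal_length_mm):
    """Full angular field of view across one sensor dimension, in degrees."""
    return math.degrees(2.0 * math.atan(sensor_dim_mm / (2.0 * focal_length_mm)))


def linear_fov_mm(sensor_dim_mm, focal_length_mm, working_distance_mm):
    """Approximate width of the imaged area at a given working distance."""
    return sensor_dim_mm * working_distance_mm / focal_length_mm


# Hypothetical example: 13.3 mm wide sensor behind a 50 mm lens, object at 500 mm.
print(angular_fov_deg(13.3, 50.0))
print(linear_fov_mm(13.3, 50.0, 500.0))
```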

Gain

The gain relates the number of photoelectrons released to the gray levels displayed. It is dependent on the voltage amplifier during readout and can be used to enhance contrast for low-light imaging.
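As an illustration of this relationship, the sketch below converts a photoelectron count into a gray level. It assumes gain is expressed in electrons per gray level (e-/ADU), with an optional fixed offset and a digitizer of a given bit depth; these parameter names and values are assumptions, not the interface of any particular camera.

```python
def electrons_to_gray(electrons, gain_e_per_adu, offset_adu=0, bit_depth=16):
    """Convert photoelectrons to a gray level, clipped to the digitizer range."""
    max_level = 2 ** bit_depth - 1
    level = int(round(electrons / gain_e_per_adu)) + offset_adu
    return max(0, min(level, max_level))


# Hypothetical example: the same 1000 e- signal at two gain settings.
print(electrons_to_gray(1000, 2.0))
print(electrons_to_gray(1000, 0.5))
```

In this convention a smaller electrons-per-gray-level value spreads the same signal over more gray levels, which is how a gain setting can enhance contrast for low-light images.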

Camera Enhancements

Sensor Improvements to Enhance UV Sensitivity from 10 – 400 nm

UV light can be divided into several subcategories, each of which requires different sensor enhancements. Photons with wavelengths below 200 nm require enhancements that change the sensor physically, and photons with wavelengths between 200 and 400 nm require both physical and chemical changes to the sensor.

Photon Conversion and Anti-Reflection Coatings: Improving UV Sensitivity Above 200 nm

UV light with wavelengths between 200 and 400 nm requires sensors that have undergone improvement processes. These processes either perform photon conversion, downconverting the UV photons to visible light, or reduce the reflectivity of the silicon sensor to improve QE.